|

The robots of

tomorrow will be the direct result of the robotic research projects of

today. The goal of most robotic research projects is the advancement

of abilities in one or more of the following technological areas:

artificial intelligence, effectors and

mobility, sensor detection (especially

robotic vision), and control systems.

These

technological advances will lead to improvements and innovations in

the application of robotics to industry, medicine, the military, space

exploration, underwater exploration, and personal service. The

research projects listed below are only a few of many robotic research

projects worldwide.

Artificial Intelligence

|

Cog, a humanoid robot from MIT. |

|

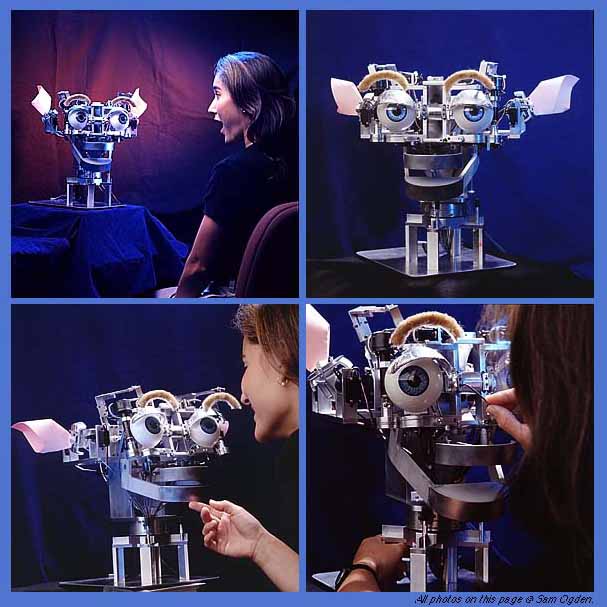

Two of the many research projects of

the MIT Artificial Intelligence department include an artificial

humanoid called Cog, and his baby brother, Kismet. What the

researchers learn while putting the robots together will be shared

to speed up development.

Once finished, Cog

will have everything except legs, whereas Kismet has only a

3.6-kilogram head that can display a wide variety of emotions. To

do this Kismet has been given movable facial features that can

express basic emotional states that resemble those of a human

infant. Kismet can thus let its "parents" know whether it needs

more or less stimulation--an interactive process that the

researchers hope will produce an intelligent robot that has some

basic "understanding" of the world.

|

|

This approach of creating AI by

building on basic behaviors through interactive learning contrasts

with older methods, in which a computer is loaded with lots of

facts about the world in the hope that intelligence will

eventually emerge.

Cog is 2 meters tall, complete

with arms, hands and all three senses--including touch-sensitive

skin. Its makers will eventually try to use the same sort of

social interaction as Kismet to help Cog develop intelligence

equivalent to that of a two-year-old child. |

|

Kismet is an autonomous robot

designed for social interactions with humans and is part of the

larger Cog Project. This project focuses not on robot-robot

interactions, but rather on the construction of robots that engage

in meaningful social exchanges with humans. By doing so, it is

possible to have a socially sophisticated human assist the robot

in acquiring more sophisticated communication skills and help

it learn the meaning these acts have for others. |

|

|

Kismet has a repertoire of responses driven by emotive and

behavioral systems. The hope is that Kismet will be able to build

upon these basic responses after it is switched on or "born",

learn all about the world and become intelligent.

Crucial to its drives are the

behaviors that Kismet uses to keep its emotional balance. For

example, when there are no visual cues to stimulate it, such as a

face or toy, it will become increasingly sad and lonely and look

for people to play with.
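
The loneliness behavior described above can be read as a homeostatic drive that drifts out of balance when nothing stimulates it. The following is only a minimal sketch of that idea: the `SocialDrive` class, its rates and its threshold are hypothetical and are not taken from Kismet's actual software.

```python
from dataclasses import dataclass


@dataclass
class SocialDrive:
    """Hypothetical homeostatic drive; every constant here is illustrative."""
    level: float = 0.0          # 0 = balanced, negative = under-stimulated
    decay: float = 0.25         # drift per step with no face or toy in view (assumed)
    recovery: float = 0.5       # gain per step while a stimulus is visible (assumed)
    lonely_below: float = -1.0  # threshold for switching behavior (assumed)

    def step(self, stimulus_present: bool) -> str:
        """Advance one time step and return the behavior to express."""
        if stimulus_present:
            # Move back toward balance, but never above it.
            self.level = min(0.0, self.level + self.recovery)
        else:
            # No visual cue: the drive drifts further from balance.
            self.level -= self.decay
        if self.level < self.lonely_below:
            return "seek_people"
        return "content"
```

Calling `step(False)` repeatedly eventually returns `"seek_people"`, and a few calls to `step(True)` bring the drive back toward balance.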

Any advances made with Kismet

will be passed on to its big brother Cog, the robot brainchild of

Rodney Brooks, head of MIT's AI department. |

|

In

mimicking human intelligence, the goal is to give robots

a brain and the ability to reason. An important pioneer in the field of AI is

Marvin Minsky.

Without a brain capable of

processing input, a robot cannot react to its environment. A brain

can be simulated in hardware or software. Most robots at present

have software brains, meaning a computer with a program running.

These robots are connected to or equipped with a computer. A

drawback is the limited number of processes that can be run on

today's computers and the single purpose programs running on these

computers. The programs cannot change themselves. In other words,

learning is not possible.

A brain made out of hardware, or a number of processors, will be

closer to reality. The brain will consist of several chips that

act both independently and as a group. The general belief is that

the real brain works as a neural network of lots of independent

processing units. Every chip in itself has a small program. It

will process information but also pass it on to other chips. The

program changes on a continuous basis. The network of chips is

quick and will adapt, so in contrast with the software brain, it

will learn.

An example of a hardware brain

is Robokoneko the robocat from Genobyte. It has a brain from a

machine, the CAM-machine. |

|

Effectors and Mobility

|

Robot helicopter research began at the University of Southern

California in 1991 with the formation of the Autonomous Flying

Vehicle Project and continues to the present day. The first robot

built was the AFV (Autonomous Flying Vehicle). The AVATAR

(Autonomous Vehicle Aerial Tracking And Retrieval), was created in

1994. The current robot, the second generation AVATAR (Autonomous

Vehicle Aerial Tracking And Reconnaissance), was developed in

1997. The ``R'' in AVATAR changed to reflect a change in robot

capabilities. |

|

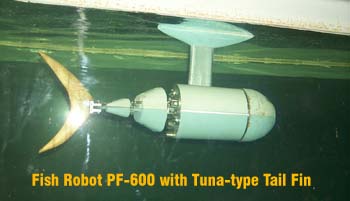

Without question, the fish is the

best swimmer in the world. That is why the Ship Research Institute

of Japan decided to build the Fish Robot. This project hopes to

apply what is learned while building and researching with the Fish

Robot to the design and construction of ships. |

|

|

Robots use electric motors for movement. Motor parts are relatively
cheap and last a long time. Motors are used to move arms, turn wheels
or move other parts, for instance cameras. Motors are less useful in
walking robots, where they prove to be a weak point; a jumping robot
is a major challenge for motor parts. Human beings use muscles, which
contract and relax, to move around. A muscle receives a signal from
the brain and contracts, causing a joint such as the knee to move.

A material that mimics a muscle is still a dream. Nitinol, an alloy of
the metals nickel and titanium, will shrink if an electric current
travels through it, but it will contract by only 8% at most. The
downsides are that nitinol is very expensive and that the contraction
is too small for it to be used in walking robots. For the time being,
walking robots will use neither muscles nor motors but pneumatic or
hydraulic technologies.
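
To see why an 8% contraction is limiting, it helps to work out the stroke a given wire can deliver. The sketch below assumes a straight wire pulling directly on a joint, with no levers or coils; the function names are hypothetical and the only figure taken from the text is the 8% maximum.

```python
def max_stroke_mm(wire_length_mm: float, max_strain: float = 0.08) -> float:
    """Upper bound on how far a wire of this length can shorten.

    max_strain=0.08 is the 8% maximum contraction quoted in the text.
    """
    if wire_length_mm < 0:
        raise ValueError("wire length must be non-negative")
    return wire_length_mm * max_strain


def wire_length_for_stroke_mm(stroke_mm: float, max_strain: float = 0.08) -> float:
    """Shortest straight wire that could deliver the requested stroke."""
    if stroke_mm < 0:
        raise ValueError("stroke must be non-negative")
    return stroke_mm / max_strain
```

Under these assumptions a 100 mm wire shortens by about 8 mm at most, and a 20 mm stroke would need roughly 250 mm of wire.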


To demonstrate advances in research and to stimulate scientists to
share progress, the RoboCup competition is organized a few times a
year. RoboCup is a competition between robot soccer teams. Movement
and pattern recognition (where is the ball, where is the goal, who is
on my team) and more are needed to score a goal. A simple game becomes
a challenge for a robot team. Besides moving and finding the ball and
their team members, the robots need to define a strategy and make many
decisions in a short time frame. RoboCup has produced many
advancements in both robotic effectors and sensors. Who could have
imagined that soccer would contribute to robot research in which
robots will eventually be smart and capable of cooperating with others
to reach a goal?

Sensor Detection

|

Machine vision involves devices which sense images and

processes which interpret the images. Thomas Braunl of The

University of Western Australia provides an excellent example

of robotic vision research in both hardware and software.

|

|

EyeBot is a controller for

mobile robots with wheels, walking robots or flying robots. It

consists of a powerful 32-bit microcontroller board with a

graphics display and a digital grayscale or color camera. The

camera is directly connected to the robot board (no frame

grabber). This allows programmers to write powerful robot

control programs without a big and heavy computer system and

without having to sacrifice vision.

- Ideal basis for programming of real time image processing
- Integrated digital camera (grayscale or color)
- Large graphics display (LCD)
- Can be extended with own mechanics and sensors to full mobile robot
- Programmed from IBM-PC or Unix workstation; programs are downloaded via serial line (RS-232) into RAM or Flash-ROM
- Programming in C or assembly language


Improv is a tool for basic real time image processing with low

resolution, e.g. suitable for mobile robots. It has been developed

for PCs running the Linux operating system. Improv works

with a number of inexpensive low-resolution digital cameras (no

framegrabber required), and is available from Joker Robotics.

Improv displays the live camera image in the first window,

while subsequent image operations can be applied to this image in

five more windows. For each sub-window, a sequence of image

processing routines may be specified.
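
The idea of attaching a sequence of image processing routines to each sub-window can be sketched in a few lines. This is not Improv's API: the `threshold`, `invert` and `run_pipeline` names, the window labels and the tiny 2x2 frame are all assumptions made for illustration.

```python
from typing import Callable, Dict, List

Image = List[List[int]]            # rows of grayscale pixels, 0..255
Op = Callable[[Image], Image]      # one image processing routine


def threshold(level: int) -> Op:
    """Build a routine that maps pixels >= level to 255 and the rest to 0."""
    def apply(img: Image) -> Image:
        return [[255 if p >= level else 0 for p in row] for row in img]
    return apply


def invert(img: Image) -> Image:
    """Swap dark and bright pixels."""
    return [[255 - p for p in row] for row in img]


def run_pipeline(img: Image, ops: List[Op]) -> Image:
    """Apply the routines in order; each one sees the previous result."""
    for op in ops:
        img = op(img)
    return img


# A stand-in for one low-resolution camera frame.
frame: Image = [[10, 200], [130, 90]]

# Each sub-window gets its own sequence of routines, applied to the live frame.
windows: Dict[str, List[Op]] = {
    "window 2": [threshold(128)],
    "window 3": [threshold(128), invert],
}
results = {name: run_pipeline(frame, ops) for name, ops in windows.items()}
```

Keeping each routine as a plain function from image to image is what makes the per-window sequences easy to rearrange.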

Sensor Based Planning incorporates

sensor information, reflecting the current state of the environment,

into a robot's planning process, as opposed to classical planning,

where full knowledge of the world's geometry is assumed to be known

prior to the planning event. Sensor based planning is important

because: (1) the robot often has no a priori knowledge of the world;

(2) the robot may have only a coarse knowledge of the world because of

limited memory; (3) the world model is bound to contain inaccuracies

which can be overcome with sensor based planning strategies; and (4)

the world is subject to unexpected occurrences or rapidly changing

situations.

There already exists a large

number of classical path planning methods. However, many of these

techniques are not amenable to sensor based interpretation. It is not

possible to simply add a step to acquire sensory information, and then

construct a plan from the acquired model using a classical technique,

since the robot needs a path planning strategy in the first place to

acquire the world model.

The first principal problem in

sensor based motion planning is the find-goal problem. In

this problem, the robot seeks to use its on-board sensors to find a

collision free path from its current configuration to a goal

configuration. In the first variation of the find goal problem, which

we term the absolute find-goal problem, the absolute

coordinates of the goal configuration are assumed to be known. A

second variation on this problem is described below.
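
One simple way to illustrate the absolute find-goal problem is a robot on a grid that knows the goal coordinates but discovers obstacles only through a short-range sensor, and replans after every step. The sketch below is an assumption-laden illustration, not a method from the research described here: the grid world, the four-neighbour sensor and the function names are all hypothetical.

```python
from collections import deque


def plan(known_blocked, start, goal, size):
    """Breadth-first search that avoids only cells already known to be blocked."""
    prev = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:
                path.append(cell)
                cell = prev[cell]
            return path[::-1]
        x, y = cell
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            inside = 0 <= nxt[0] < size and 0 <= nxt[1] < size
            if inside and nxt not in known_blocked and nxt not in prev:
                prev[nxt] = cell
                queue.append(nxt)
    return None


def sense(world_blocked, cell):
    """Simulated on-board sensor: reports which of the four neighbours are blocked."""
    x, y = cell
    neighbours = ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1))
    return {n for n in neighbours if n in world_blocked}


def find_goal(world_blocked, start, goal, size):
    """Sense, plan on what is known so far, take one step, and repeat."""
    known, pos, travelled = set(), start, [start]
    while pos != goal:
        known |= sense(world_blocked, pos)
        path = plan(known, pos, goal, size)
        if path is None:
            return None              # no route given everything sensed so far
        pos = path[1]                # a neighbour just sensed as free
        travelled.append(pos)
    return travelled
```

The planner is optimistic: any cell not yet sensed is treated as free, which is why sensing and planning have to be interleaved rather than run one after the other.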

The second principal problem in

sensor based motion planning is sensor-based exploration, in

which a robot is not directed to seek a particular goal in an unknown

environment, but is instead directed to explore the a priori unknown

environment in such a way as to see all potentially important

features. The exploration problem can be motivated by the following

application. Imagine that a robot is to explore the interior of a

collapsed building, which has crumbled due to an earthquake, in order

to search for human survivors. It is clearly impossible to have

knowledge of the building's interior geometry prior to the

exploration. Thus, the robot must be able to see, with its on-board

sensors, all points in the building's interior while following its

exploration path. In this way, no potential survivors will be missed

by the exploring robot. Algorithms that solve the find-goal problem

are not useful for exploration because the location of the ``goal'' (a

human survivor in our example) is not known. A second variation on the

find-goal problem that is motivated by this scenario and which is an

intermediary between the find-goal and exploration problems is the

recognizable find-goal problem. In this case, the absolute

coordinates of the goal are not known, but it is assumed that the

robot can recognize the goal if it comes within line of sight. The

aim of the recognizable find-goal problem is to explore an unknown

environment so as to find a recognizable goal. If the goal is reached

before the entire environment is searched, then the search procedure

is terminated.
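
Exploration and the recognizable find-goal problem differ only in the stopping rule, which a small grid sketch can make concrete. The code below is a hypothetical illustration: it assumes a sensor that sees the robot's own cell and its four neighbours, a goal that lies on a free cell, and a robot that always moves to the nearest seen but unvisited cell.

```python
from collections import deque


def neighbours(cell):
    x, y = cell
    return [(x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)]


def nearest_unvisited(pos, seen, visited):
    """Breadth-first search through seen cells for the closest unvisited one."""
    queue, reached = deque([pos]), {pos}
    while queue:
        cell = queue.popleft()
        if cell not in visited:
            return cell
        for n in neighbours(cell):
            if n in seen and n not in reached:
                reached.add(n)
                queue.append(n)
    return None


def explore(free_cells, start, goal=None):
    """Explore until everything reachable is seen, or until the goal is recognized.

    free_cells is the true world; the robot learns about it only by sensing.
    Returns (seen_cells, goal_found).
    """
    seen, visited, pos = set(), set(), start
    while True:
        # Sense the current cell and its four neighbours.
        seen |= {c for c in [pos] + neighbours(pos) if c in free_cells}
        if goal is not None and goal in seen:
            return seen, True        # recognizable goal: stop the search early
        visited.add(pos)
        target = nearest_unvisited(pos, seen, visited)
        if target is None:
            return seen, False       # nothing left to explore
        pos = target                 # travel through already-seen cells
```

With `goal=None` the routine is pure exploration. Passing a goal turns it into the recognizable find-goal variant, which terminates as soon as the goal enters the sensor footprint.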

Control Systems

|

Development of a hierarchical behavior control scheme for rovers

and mobile robots is currently underway. It attempts to model and

control mobile systems using distinct rule-based controllers and

decision-making subsystems that collectively represent a

hierarchical decomposition of autonomous vehicle behavior. This

research approach employs fuzzy logic, behavior control, and

genetic programming as tools for developing autonomous robots.

Complex, multi-variable fuzzy rule-based systems are developed in

the framework of behavior-based control for autonomous navigation.

Genetic programming methods are used to computationally evolve

fuzzy coordination rules for low-level motion behaviors. In

addition, embedded control applications are being developed for microrover navigation using conventional microprocessors and

specialized fuzzy VLSI chips. |
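
A fuzzy rule-based behavior of the kind mentioned above can be sketched with two rules and a weighted-average output. Everything in this example is an assumption chosen for illustration: the membership shapes, the distances, the speeds and the rule table are hypothetical, and the genetic programming step that would evolve such rules is not shown.

```python
def near(distance_m: float) -> float:
    """Degree to which an obstacle is 'near': 1 at 0 m, falling to 0 at 2 m."""
    return max(0.0, min(1.0, (2.0 - distance_m) / 2.0))


def far(distance_m: float) -> float:
    """Degree to which an obstacle is 'far': 0 at 0.5 m, rising to 1 at 3 m."""
    return max(0.0, min(1.0, (distance_m - 0.5) / 2.5))


# Each rule pairs an antecedent with a crisp output speed in m/s:
#   IF obstacle is near THEN speed is 0.1
#   IF obstacle is far  THEN speed is 1.0
RULES = [(near, 0.1), (far, 1.0)]


def fuzzy_speed(distance_m: float) -> float:
    """Blend the rule outputs, weighted by how strongly each rule fires."""
    fired = [(membership(distance_m), speed) for membership, speed in RULES]
    total = sum(weight for weight, _ in fired)
    if total == 0.0:
        return 0.0                   # no rule applies: stop as a safe default
    return sum(weight * speed for weight, speed in fired) / total
```

Because the two memberships overlap between 0.5 m and 2 m, the commanded speed changes smoothly instead of switching abruptly at a single threshold.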

The movie Innerspace shows a miniature spaceship travelling through
the arteries of a human. It is a nice illustration of the promise of
nanotechnology, a technique in which miniature robots go to places
humans will never be able to travel. Nanotechnology is a new science
in which robotics plays a major part. The very tiny mechanical parts
raise questions that need to be answered: Can a robot repair itself?
How do you control a nanorobot? How does a nanorobot move? Will it be
able to work autonomously? Will it be able to shift its shape? Is a
nanorobot a mechanical device, or is it more like a microprocessor?
Once these questions are answered, nanotechnology will change medical
science forever. In many cases, surgery will be performed by one or
more nanorobots travelling inside the human body.

Third generation wireless networks like the Universal Mobile
Telecommunications System (UMTS) are being developed to support
wideband services. A major scenario is to support such services for a
user roaming between a cellular terrestrial network and a satellite
Personal Communication Network (PCN) while maintaining the quality of
service during the hand-over process, which requires some degree of
continuity in quality of service guarantees. This project will focus
on developing new protocols which use artificial intelligence systems
to support such a hand-over process. Furthermore, seamless roaming and
user tracking using intelligent systems will be investigated.

In recent years, the

reduction of undesirable vibrations in dynamic systems such as

airplanes, vehicles, tall buildings and off-shore structures has

become a crucial issue due to the increased social awareness of

comfort as well as the ever increasing heights of new inner city

buildings. With the advent of new construction materials and new

construction methods, the buildings and structures are becoming

taller, and more flexible. With a good design and under normal loading

conditions, the response of these structures to vibrations will remain

in the safe and comfortable domain. However, there is no guarantee

that in-service loads experienced by tall buildings and structures

will always be in the allowed range. The undesirable vibration levels

could be reached under large environmental loads such as winds and

earthquakes, and could adversely affect human comfort and even

structural safety. It is becoming critically important to suppress

dynamic responses of tall buildings and structures due to the strong

winds and earthquakes not only for their safety but also their

serviceability. When tall buildings and structures are flexible,

design performances may become impossible to achieve by conventional

design practice. Hence, additional devices are installed in tall

buildings and structures to compensate for the dynamic responses caused by

environmental loads. As a result, new concepts and methods of

structural protection have been proposed.

Due to recent development of

sensors and digital control techniques, active control methods of

dynamic responses of tall buildings and structures have been

developed, and some of them have been implemented in actual buildings.
The precondition, however, is that the implementation is simple enough
to run in real time. In engineering applications where rule-based
systems provide efficient results, the implementation is often easier
than its complex conventional counterpart.

Active vibration control in structural engineering has become known
as an area of research in which the vibrations and motions of tall
buildings and structures can be controlled or modified by the actions
of a control system drawing on an external energy supply. Compared
with passive vibration control, active vibration control can more
effectively keep tall buildings and structures safe and comfortable
under various environmental loads such as strong winds or earthquakes.
It can be effective and adaptive over a much wider frequency range and
also for transient vibration, which is why it attracts the interest of
researchers not only in structural engineering but also in control
engineering. Among the many methods that have been proposed are active
mass drivers (AMDs), active tendon systems (ATSs), and active variable
stiffness systems (AVSs).
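
The difference between passive and active control can be illustrated with a single mass on a spring and damper, where the controller adds a force opposing the measured velocity. This is a deliberately idealized sketch, not a model of an AMD, ATS or AVS: the one-degree-of-freedom structure, the parameter values and the velocity-feedback law are all assumptions.

```python
def peak_response(gain: float, mass: float = 1.0, stiffness: float = 40.0,
                  damping: float = 0.2, x0: float = 0.1, dt: float = 0.001,
                  t_end: float = 10.0, t_settle: float = 5.0) -> float:
    """Largest |x| after t_settle seconds of free vibration.

    Simulates m*x'' + c*x' + k*x = u with the control force u = -gain * x'.
    gain = 0 is the purely passive structure.
    """
    x, v = x0, 0.0
    peak = 0.0
    for i in range(int(t_end / dt)):
        u = -gain * v                              # force from the actuator
        a = (u - damping * v - stiffness * x) / mass
        v += a * dt                                # semi-implicit Euler step
        x += v * dt
        if i * dt >= t_settle:
            peak = max(peak, abs(x))
    return peak


passive_peak = peak_response(gain=0.0)
active_peak = peak_response(gain=2.0)
```

With these assumed values the actively controlled response dies out much faster than the passive one, at the cost of an actuator that must be powered and a sensor that must measure velocity in real time.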

Robot manipulators which have more

than the minimum number of degrees-of-freedom are termed ``kinematically

redundant,'' or simply ``redundant.'' Redundancy in manipulator design

has been recognized as a means to improve manipulator performance in

complex and unstructured environments. ``Hyper-redundant'' robots have

a very large degree of kinematic redundancy, and are analogous in

morphology and operation to snakes, elephant trunks, and tentacles.

There are a number of very important applications where such robots

would be advantageous.
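
Redundancy is easiest to see in a planar arm with three joints that only has to place its tip at an (x, y) position: two numbers are required, three joints are available, so one joint angle can be chosen freely. The sketch below assumes unit link lengths and hypothetical function names, and uses the standard two-link inverse kinematics formula for the last two links.

```python
import math


def forward(q, lengths=(1.0, 1.0, 1.0)):
    """Tip position of a planar arm with relative joint angles q (radians)."""
    x = y = angle = 0.0
    for qi, li in zip(q, lengths):
        angle += qi
        x += li * math.cos(angle)
        y += li * math.sin(angle)
    return x, y


def solve_with_free_joint(target, q1, lengths=(1.0, 1.0, 1.0)):
    """Choose q1 freely, then solve links 2 and 3 as an ordinary two-link arm.

    Returns (q1, q2, q3), or None if the target is out of reach for this q1.
    """
    l1, l2, l3 = lengths
    # Target as seen from the end of the first link.
    dx = target[0] - l1 * math.cos(q1)
    dy = target[1] - l1 * math.sin(q1)
    cos_q3 = (dx * dx + dy * dy - l2 * l2 - l3 * l3) / (2.0 * l2 * l3)
    if abs(cos_q3) > 1.0:
        return None
    q3 = math.acos(cos_q3)
    # Absolute direction of link 2, then convert to an angle relative to link 1.
    link2_angle = math.atan2(dy, dx) - math.atan2(l3 * math.sin(q3),
                                                  l2 + l3 * math.cos(q3))
    return (q1, link2_angle - q1, q3)


target = (1.5, 1.0)
pose_a = solve_with_free_joint(target, q1=0.2)
pose_b = solve_with_free_joint(target, q1=1.0)
```

`pose_a` and `pose_b` are different joint configurations that place the tip at the same target, which is the extra freedom a redundant arm can spend on avoiding obstacles. A hyper-redundant robot has many such free parameters rather than one.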

While ``snake-like'' robots have

been investigated for nearly 25 years, they have remained a laboratory curiosity. There are a number of reasons for this: (1) previous

kinematic modeling techniques have not been particularly efficient or

well suited to the needs of hyper-redundant robot task modeling; (2)

the mechanical design and implementation of hyper-redundant robots has

been perceived as unnecessarily complex; and (3) hyper-redundant

robots are not anthropomorphic, and therefore pose interesting

programming problems. Our research group has undertaken a broadly

based program to overcome the obstacles to practical deployment of

hyper-redundant robots. |